Second, it is very common to use more than one technology to improve performance and accessibility. In large organizations, communication and documentation are too high an overhead to implement these techniques successfully. Therefore, the data team has to manually communicate the existence of the optimized copies of the data to the analysts. Two factors make it hard to use these techniques in the Hadoop ecosystem.įirst of all, a survey of the popular open-source (Presto, Apache Hive, and Spark SQL) and commercial analytic databases (Amazon Redshift, HP Vertica, EMC Greenplum) showed that none of them supported materialized views. These techniques are not new and have been known for a long time in the database world. The improvement in query performance of using denormalized tables is up to 66%. The graph below shows the performance improvement of queries that read the denormalized table of store_sales and items.

If there is a copy of the join result of these two tables, then each of the queries requires one less stage. In TPCDS queries, store_sales and items table are joined in a few queries. So if a table contains such unused columns, it is helpful to keep a copy of the data with just the popular columns in a table. However, extra columns do affect the performance of a query. Extra int columns do not have a detrimental effect on performance. The graph below shows the effect of extra columns in a table. If the analysts had access to narrow versions of these tables, then each query would require much less I/O. Below is a sample of our findings, along with anonymized table names: The customer submitted more than 130,000 queries in 2 months. We analyzed the workload of one of Qubole’s largest customers. OLAP queries do not access all columns in a table. Using the sorted tables increased the performance by as much as 78%. In this benchmark, we sorted by ss_sold_date_sk, ss_item_sk since the data is not partitioned. The graph below shows the performance improvement in some of the queries in TPCDS that filter and join on ss_sold_date_sk and ss_item_sk. This allows the readers to use binary search and eliminate blocks. Instead, we can sort the data on ss_item_sk and store it in a format like ORC or Parquet. However, it is not possible to partition on ss_item_sk since it has too many unique values. The next step is to focus ss_item_sk since it is used in join clauses. The first step is to partition on ss_sold_date_sk since it is used in the filter and join clauses. For example, consider the store_sales table in TPC-DS.

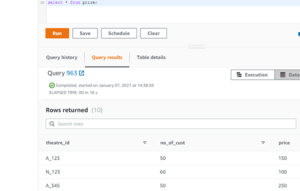

However, there are situations when it is not possible to partition these columns. The best practice is to partition data on these columns which will help the SQL engine parallelize the execution of the query. The access path of columns that are used in Filter, Join, and Group By clauses has a substantial effect on the run time of a query. The techniques listed below are applicable regardless of the SQL engine or data format.The timings are the best of three runs.Presto SQL engine running on 4-node r3.xlarge instances.Later, we talk about how one can use Quark (which we detailed in a previous post) to easily implement these performance optimizations in a Big Data analytics stack.Īll performance numbers were obtained on the following setup: First, we will explore the techniques and benchmark some sample results. This blog post explores how queries can be sped up by keeping optimized copies of the data.

0 Comments

Leave a Reply. |

RSS Feed

RSS Feed